Auditing ISO 9001/AS 9100, sec. 7.1.5 (Calibration)

Test your knowledge and auditing skills to properly assess ISO 9001 / AS9100, sec. 7.1.5 by reviewing the following series of common scenarios encountered by both internal and external ISO 9001 / AS9100 auditors. Most of the technical information provided would be obvious to any 1st year (entry-level) metrologist (calibration technician). Also, ALL of the scenarios presented are taken from real-world situations I have personally encountered.

Scenarios

Read the scenarios below and decide whether each is a nonconformity. If so, decide whether it is a minor or a major nonconformity (Ref. "Classifying Nonconformities (ISO 9001 & AS9100)").

Click on the individual “Scenario” link (below) to “jump” to a discussion of that scenario and my opinions.

Scenario #1: You discover that the calibration status label on a micrometer is past its expiration (due) date.

Scenario #2:  You observe production personnel in a machine shop verifying products using instruments marked “For Reference Only”. Upon asking why none of these instruments have been calibrated, the company guide informs you that production personnel are required to take the 3 items to the “Quality Inspectors”, located in a lab. Using calibrated instruments, the “Quality Inspectors” verify the first piece from each production run, then a random piece (near the middle of the production run), and then verify the final piece. Therefore, there is no need for the production personnel to have calibrated instruments.

Scenario #3:  While visiting an inspection area in a powder processing plant, you observe a number of test sieves labeled “Special Calibration” with no calibration due date. Upon asking what a “special calibration” is, the company guide informs you that the test sieves consist of a stainless steel mesh that is more than sufficient to withstand any wear that the product being tested might exert on it. Therefore, the sieves are considered “single calibration devices”. However, the inspectors visually verify that there has not been any breakage or distortion of the mesh prior to each use. The company guide then presents you with a sampling of the small calibration certificates, some of which are several years old, that accompany each test sieve. You see that each test sieve has been calibrated according to ASTM E11, ISO 565 & ISO 3310-1.

Scenario #4: Upon reviewing a calibration certificate, you discover that it does not contain NIST “traceability” number(s).

Scenario #5: You observed a micrometer being used in the production area. Upon reviewing its calibration record, you see that it was calibrated in-house using a set of Gage Blocks. In order to verify metrological traceability, you review the calibration record for the Gage Blocks and discover that they too, had been calibrated in-house… using a Precision Micrometer. Continuing to investigate metrological traceability, you review the calibration record for the Precision Micrometer and discover that it had been calibrated in-house… using that same Gage Block set. The Precision Micrometer and Gage Blocks were being used to calibrate each other.

Scenario #6: Similar to the above scenario, but at a different company, you discover that the company is using a Starrett W733.1XFL-1 Wireless Electronic Micrometer to calibrate their class AS-2 Gage Block set (stainless steel, rectangular, 0.010" to 2"). While the Starrett W733.1XFL-1 Micrometer Calibration certificate contains minimal information, you notice that it has a digital display with a resolution of 0.00005" (50 μin.). To check whether the Test Accuracy Ratio (T.A.R.) is sufficient, you note that the Gage Blocks Calibration Certificate indicates an accuracy of ±10 μin. (±0.00001").

Scenario #7: You observed a weighing scale with a calibration status label. Upon examining its associated Calibration Certificate, you notice that the certificate was issued to a different address than the location where the audit is being performed. Upon asking about this discrepancy, you're informed that the weighing scale was calibrated at a different location and shipped to the current location.

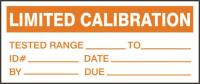

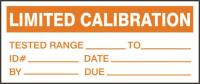

Scenario #8: You observed calipers being used throughout the production process, and observed that the majority of these calipers supported the “Step” dimensional measurement. Upon interviewing multiple operators, you learned that some use the “Step” feature while others do not. You took note that NONE of these calipers with a “Step” feature had a “Limited Calibration” status label… and you were told that the calipers are calibrated in-house. Upon examining the Calibration record, you noticed that the “Step” feature was not included in the calibration results. It appears that the company has not been calibrating the “Step” feature on calipers that support this dimensional measurement.

Scenario #9 (AS 9100 ONLY): You observed micrometers being used throughout the production process, and you were told that the micrometers are calibrated “in-house”. Upon Reviewing the Calibration Method, you noticed that neither the “flatness” nor “parallelism” of the anvil with the spindle had been calibrated.

Scenario #10 (AS 9100 ONLY): Upon reviewing the company's “register of the monitoring and measuring equipment”, which was maintained in an Excel spreadsheet, you discovered it did not include the:

- calibration or verification method, and

- acceptance criteria.

The company explained that, since all of their calibrations are outsourced, this information can be found in the calibration certificates provided by the external calibration lab.

1 - Micrometer Past its Calibration Due Date

Situation: You discover that the calibration status label on a micrometer is past its expiration (due) date.

(1) Is this a nonconformity? And if so, (2) is it a minor or a major nonconformity?

Answer: While this would appear to be a clear-cut nonconformity, it is not. This condition requires more information.

Is this a nonconformity?

The auditor should understand and recognize that a measuring instrument does not magically go “Out of Tolerance” on a certain date. The calibration interval (cycle) is merely a “risk control” to mitigate:

- the likelihood of an instrument drifting out of tolerance without the user's knowledge, and

- the impact of an “Out-of-Tolerance” condition, such as the shipment of nonconforming product(s) due to errors in measurements.

There are many factors that can contribute toward an instrument drifting out of tolerance, which include, but are not limited to:

- Wear (due to frequent use—which is most common for dimensional measurement devices)

- Use in an environment containing gritty or dirty substances (which will accelerate wear of most dimensional measurement devices)

- Misuse, mishandling, and/or neglect

- Use in an unstable environment (e.g., changes in temperature or humidity, vibration, wind)

- Exposure to harsh conditions, such as corrosive substances or extreme temperature changes (causing rapid degradation of the instrument)

- An unstable electrical source (e.g., “brown-outs”) contributing to the degradation of electronic components

- Natural instrument degradation over time (e.g., aging of electronic components)

With this understanding, the auditor should ask whether the company has a “Calibration Management System” (CMS) procedure.

If the answer is yes, the auditor should review the CMS procedure, because many companies grant themselves an automatic extension to the calibration interval/cycle (e.g., 30 days) or a conditional calibration due-date extension based upon management approval (to accept the increased risk). There are many good reasons why a company may choose to extend a calibration interval/cycle. Typically, though, the reason is to complete existing work in progress, accepting slightly greater risk in exchange for on-time completion/delivery of the product/service or completion of a project. This is perfectly acceptable.

I've seen CMS procedures that state: “Calibration is due during the month identified on the calibration status label, without regard for the specific day identified.” and “Calibration is due within 45 days past the date identified on the calibration status label.” Either statement satisfies the requirement in ISO 9001/AS9100, sec. 7.1.5.2a relating to specifying an “interval”.

While there is NO requirement in ISO 9001 / AS9100 to have a documented “Calibration Management System” (CMS), ISO 9001 / AS 9100, sec. 7.1.5.1 states:

The organization shall retain appropriate documented information as evidence of fitness for purpose of the monitoring and measurement resources.

Having a CMS procedure allows a company to explain how a calibration interval/cycle is pertinent to addressing:

ISO 9001 / AS 9100, sec. 4.1 “Understanding the context of the organization”: “The organization shall determine external and internal issues that are relevant to its purpose and its strategic direction and that affect its ability to achieve the intended result(s) of its quality management system.” and:

ISO 9001 / AS 9100, sec. 6.1 “Actions to address risks and opportunities”: “6.1.1 When planning for the quality management system, the organization shall consider the issues referred to in 4.1 and the requirements referred to in 4.2 and determine the risks and opportunities that need to be addressed…”

Many quality auditors incorrectly believe that a calibration status label is required by ISO 9001 / AS 9100. A label is merely the most popular way to address the actual requirement. ISO 9001 / AS 9100, sec. 7.1.5.2 “Measurement Traceability” states:

When measurement traceability is a requirement, or is considered by the organization to be an essential part of providing confidence in the validity of measurement results, measuring equipment shall be:

b) identified in order to determine their status;

Prior to determining whether a nonconformity exists, the auditor must:

- determine whether there are clearly defined provisions to extend a calibration interval/cycle; then determine whether the company complied with these provisions; and

- determine whether these provisions allow for superseding any sort of calibration status label (or equivalent).

If the company is in compliance with its defined provisions to extend a calibration interval/cycle and the device is “identified in order to determine” its status, then there is no nonconformity.

However, if the company either has no such provisions or has violated its own requirements relating to extending a calibration interval/cycle and/or there are no defined provisions for superseding the calibration due date on a calibration status label (or equivalent), then a clear nonconformity exists (i.e., contrary to 7.1.5.2a, the instrument was not calibrated or verified at its specified interval).
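The decision logic above can be sketched in a few lines. This is only an illustration, not a substitute for reading the company's CMS procedure: the function name and the parameters `grace_days` and `extension_approved_until` are hypothetical, and the sketch assumes the procedure also allows the extension to supersede the date on the status label.

```python
from datetime import date, timedelta
from typing import Optional


def is_past_due_nonconformity(
    label_due: date,
    audit_date: date,
    grace_days: Optional[int] = None,
    extension_approved_until: Optional[date] = None,
) -> bool:
    """Return True when an instrument past its label date is a nonconformity.

    grace_days: automatic extension defined in the CMS procedure (None if
        the procedure defines no such extension).
    extension_approved_until: end date of a management-approved conditional
        extension (None if none was approved).
    """
    if audit_date <= label_due:
        return False  # not past due at all

    # Automatic extension defined in the procedure (e.g., 30 or 45 days).
    if grace_days is not None and audit_date <= label_due + timedelta(days=grace_days):
        return False

    # Conditional extension accepted by management.
    if extension_approved_until is not None and audit_date <= extension_approved_until:
        return False

    # No provision applies, or the company exceeded its own provision.
    return True


due = date(2024, 1, 31)
audit = date(2024, 2, 15)
print(is_past_due_nonconformity(due, audit))                 # no provisions defined
print(is_past_due_nonconformity(due, audit, grace_days=45))  # within a 45-day extension
```

With no defined provisions, the overdue micrometer is a nonconformity; with a documented 45-day extension, the same micrometer on the same audit date is not.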

Is this a Minor or a Major Nonconformity?

In order to classify a device that is past its calibration due date as a major nonconformity, the auditor must confirm that this is a “Nonconformity where the effect is judged to be detrimental to the integrity of the product or service” and/or is a “nonconformity that can result in the probable shipment of nonconforming outputs; and a condition that can result in the failure or reduce the usability of the product or service and its intended purpose.”

How can the auditor conclusively reach this determination?

There are several ways to do this. However, if the company is either unwilling or unable to provide any evidence that would justify downgrading the nonconformity from major to minor, then the auditor should assume that a major nonconformity exists.

Verification during the audit

The auditor “could” ask the operator to measure a finished part at 2 or 3 different dimensions using the micrometer that is past its calibration due date, and then ask the operator to measure those same dimensions using a micrometer within its calibration interval. If the measurements are the same (or very similar), AND both micrometers indicate that the part is within tolerance, then a Minor nonconformity is justified. However, if there is a significant difference between the readings OR the micrometers disagreed whether the part was within tolerance, then a Major nonconformity is justified.

Assessing the Accuracy Ratio

If you're unfamiliar with “accuracy ratios”, read the linked article titled “What are Accuracy Ratios”.

If the calculated “accuracy ratio” is 4:1 or greater, this could be justification for identifying the nonconformity as a minor.

However, if the “accuracy ratio” is below 4:1, then this could be justification for a major nonconformity.

Calibration during the audit

Depending upon the audit schedule, if the company has the capability to calibrate a micrometer “in-house”, they may opt to do so. If the results of that calibration indicate that the instrument was within tolerance, then a minor nonconformity is justified. However, if the results indicate an “Out-of-Tolerance” condition, then a Major nonconformity is justified.

2 - Use of "For Reference Only" Status Labels

Situation: You observe production personnel in a machine shop verifying products using instruments marked “For Reference Only”. Upon asking why none of these instruments have been calibrated, the company guide informs you that production personnel are required to take the 3 items to the “Quality Inspectors”, located in a lab. Using calibrated instruments, the “Quality Inspectors” verify the first piece from each production run, then a random piece (near the middle of the production run), and then verify the final piece. Therefore, there is no need for the production personnel to have calibrated instruments.

(1) Is this a nonconformity? And if so, (2) is it a minor or a major nonconformity?

Answer: More information is required.

You visit the “Quality Lab” and confirm that Inspectors are using calibrated instruments to inspect 3 pieces from each “job” run. You ask about the statistical basis for the sample size of 3, and the company guide informs you that the Inspectors are performing acceptance sampling using “Table 1” from "Zero Acceptance Number Sampling Plans", Fifth Edition by Nicholas L. Squeglia.

Interviews with Inspection personnel reveal that none of them have, or are even aware of, “Table 1” from "Zero Acceptance Number Sampling Plans". The company guide explains that there is no need for the Inspectors to be trained in the use of “Table 1” because, when referencing that Table, the required sample size for lot quantities of 25 and below (for an AQL of 4.0) is always 3. Therefore, it is sufficient to only train the Inspectors to verify the first piece, a random piece (near the middle of the production run), and the final piece for each job.

The guide then explains that, because this is a “job shop” handling small orders, none of their order quantities ever exceed 25. Therefore, calibrated instruments are used to inspect the required 3 samples (indicated in “Table 1” when the AQL is 4.0).

While ISO 9001:2015 does NOT specifically require organizations to “ensure” that sampling methods/plans are “justified on the basis of recognized statistical principles” (as AS 9100, sec. 8.5.1c,2 does), it would be arguable as to whether there was a nonconformance with ISO 9001:2015, sec. 8.5.1c.

If you review the completed jobs and find no instance of a quantity exceeding 25, then there is no nonconformity because calibrated instruments are being used on the required sample size (even though this is a poor practice). However, if you find any instances where the order quantity exceeds 25 (referencing “Table 1” with an AQL of 4.0, the sample size increases to 7 for lot quantities of 26-50), then this becomes a major nonconformity citing ISO 9001/AS9100, sec. 7.1.5.1 “General”, which states:

The organization shall determine and provide the resources needed to ensure valid and reliable results when monitoring or measuring is used to verify the conformity of products and services to requirements.

The above scenario is most often identified as a major nonconformity when a small machine shop using this approach experiences a period of sudden growth… sporadically receiving orders over 25… while their personnel still operate as if each order is 25 or less.
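The review of completed jobs described above can be sketched as a simple check. The sketch encodes only the two sample sizes quoted in this article for an AQL of 4.0 (3 for lots of 25 and below, 7 for lots of 26-50); it is not a reproduction of “Table 1”. The function names, the job IDs, and the rule that a sample cannot exceed the lot itself are my own assumptions for illustration.

```python
from typing import Dict, List, Tuple


def required_sample_size(lot_quantity: int) -> int:
    """Sample size at AQL 4.0, using only the rows quoted in the article."""
    if lot_quantity < 1:
        raise ValueError("lot quantity must be at least 1")
    if lot_quantity <= 25:
        # Assumption: a sample cannot be larger than the lot itself.
        return min(3, lot_quantity)
    if lot_quantity <= 50:
        return 7
    # Larger lots are outside the rows quoted here; consult the actual table.
    raise ValueError("lot quantity exceeds the rows quoted in the article")


def undersampled_jobs(jobs: Dict[str, Tuple[int, int]]) -> List[str]:
    """Return IDs of jobs inspected with fewer calibrated checks than required.

    jobs maps a job ID to (lot quantity, pieces verified with calibrated
    instruments).
    """
    return [
        job_id
        for job_id, (quantity, inspected) in jobs.items()
        if inspected < required_sample_size(quantity)
    ]


# Hypothetical job records: every job received the usual 3-piece inspection.
completed = {"J-101": (12, 3), "J-102": (25, 3), "J-103": (40, 3)}
print(undersampled_jobs(completed))
```

Any job ID returned by `undersampled_jobs` is an order where product was accepted on fewer calibrated measurements than the company's own sampling plan requires.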

3 - Use of "Special Calibration" Status Labels

Situation: While visiting an inspection area in a powder processing plant, you observe a number of test sieves labeled “Special Calibration” with no calibration due date. Upon asking what a “special calibration” is, the company guide informs you that the test sieves consist of a stainless steel mesh that is more than sufficient to withstand any wear that the product being tested might exert on it. Therefore, the sieves are considered “single calibration devices”. However, the inspectors visually verify that there has not been any breakage or distortion of the mesh prior to each use. The company guide then presents you with a sampling of the small calibration certificates, some of which are several years old, that accompany each test sieve. You see that each test sieve has been calibrated according to ASTM E11, ISO 565 & ISO 3310-1.

(1) Is this a nonconformity?

Answer: No.

ISO 9001 / AS9100, sec. 7.1.5.2, “Measurement traceability” states:

When measurement traceability is a requirement, or is considered by the organization to be an essential part of providing confidence in the validity of measurement results, measuring equipment shall be:

a) calibrated or verified, or both, at specified intervals, or prior to use, against measurement standards traceable to international or national measurement standards; when no such standards exist, the basis used for calibration or verification shall be retained as documented information;

ISO 9001 / AS9100, sec. 7.1.5.2a requires measuring devices to be calibrated EITHER “at specified intervals” OR “prior to use”. Also, note that “prior to use” does NOT mandate “prior to EACH use”. Since the test sieves were calibrated prior to being placed into service, they satisfy the requirements of ISO 9001 / AS9100, sec. 7.1.5.2a.

Companies have taken this same approach toward stainless steel rulers (in a low-wear environment) and graduated glass cylinders (for non-abrasive & non-corrosive materials). Key considerations for these types of devices are:

- no adjustments of the readout are possible,

- the devices are made of material that is resistant to wear (e.g., stainless steel), and

- exposed to extremely low wear (e.g., minimal or no contact).

IF the above test sieves had been used to filter particle sizes of highly abrasive material, then this approach may not have been justified.

Retractable tape measures are NOT a candidate for a “single calibration device” because they include a “hook” at the end that slides inside of a “hook slot”. The metal “hook” is loose because the first inch of the “tape” (aka “blade”) is 1/16 of an inch short, meaning it needs to be pulled taut (within the “hook slot”) to ensure accuracy. Since the “tape” is made of mild steel, the “hook slot” is highly subject to wear from the “hook” repeatedly sliding back and forth. Retractable tape measures are typically calibrated using a Lixer A-Class Tape Measure Calibration Tool in accordance with the Lixer Tape Measure Calibration Procedure.

4 - Certificate has no NIST "traceability" number(s)

Situation: Upon reviewing a calibration certificate, you discover that it does not contain a NIST “traceability” number(s).

(1) Is this a nonconformity?

Answer: No… because there is no such thing as a NIST “traceability” number. I understand that this may come as quite a shock to some auditors who were taught to always verify that NIST Test Numbers appear on calibration certificates. However, much like Sasquatch, the NIST “traceability” number is a persistent myth… that came into existence due to a misunderstanding of the NIST “Test Number”, which is used for administrative purposes only. This is supported by official interpretations from NIST.

NIST GMP 13, “Good Measurement Practice for Ensuring Metrological Traceability”, sec. 1.1 (3rd para.):

“Test numbers issued by NIST should not be used nor required as proof of the adequacy or traceability of a test or measurement. Having a NIST number does not provide evidence that the measurement value provided by another organization has the property of metrological traceability.”

And

NIST GMP 13, “Good Measurement Practice for Ensuring Metrological Traceability”, sec. 1.6.3, “Use of, or reference to, official test numbers of a national metrology institute.”:

“Having an authentic test number does not provide assurance or evidence that the measurement value provided by another organization is traceable. Not only must there be an unbroken chain of comparisons, but each measurement must be accompanied by a statement of uncertainty associated with the value. Test report numbers should not be used nor required as proof of the adequacy or traceability of a test or measurement. National and international documentary standards for test and measurement quality requirements, such as ISO 10012, ISO/IEC 17025, and the ISO 9000 series, provide guidance for assessing metrological traceability and do not require the use or reporting of specific test numbers to establish metrological traceability.”

This is further noted in the:

NIST “Supplementary Materials for NIST Policy Review”:

I.B.4 Is a NIST Test Report Number necessary and/or sufficient evidence of traceability?

“Test report numbers issued by NIST are intended to be used solely for administrative purposes. Although they are often used to uniquely identify documents which bear evidence of traceability, test report numbers themselves do not address the issues listed in I.B.1 above, and should not be used nor required as the sole proof of traceability.”

For a more detailed discussion of this topic, read: NIST Traceability Numbers - The Sasquatch of Metrology

5 - Metrological Traceability Loop

Situation: You observed a micrometer being used in the production area. Upon reviewing its calibration record, you see that it was calibrated in-house using a set of Gage blocks. In order to assure metrological traceability, you review the calibration record for the Gage blocks and discover that they too, had been calibrated in-house… using a Precision Micrometer. Continuing to investigate metrological traceability, you review the calibration record for the Precision Micrometer and discover that it had been calibrated in-house… using that same Gage block set. The Precision Micrometer and Gage blocks are being used to calibrate each other.

(1) Is this a nonconformity? And if so, (2) is it a minor or a major nonconformity?

Answer: This is a major nonconformity.

ISO 9001 / AS9100, sec. 7.1.5.2, “Measurement traceability” states:

When measurement traceability is a requirement, or is considered by the organization to be an essential part of providing confidence in the validity of measurement results, measuring equipment shall be:

a) calibrated or verified, or both, at specified intervals, or prior to use, against measurement standards traceable to international or national measurement standards; when no such standards exist, the basis used for calibration or verification shall be retained as documented information;

Since these two devices are being used to calibrate each other, they've lost metrological traceability to “measurement standards traceable to international or national measurement standards”.

As the Gage blocks wear, that gradual change will be transferred to the Precision Micrometer during its calibration. As these two devices are alternated in their use to calibrate each other, the Precision Micrometer will be adjusted to correlate with the Gage blocks. Over time, the two will drift further away from their true values… and, more significantly, this “drift” is being flowed down to all of the other instruments calibrated using the Gage blocks. Consequently, this is a Major nonconformity because:

- there is a significant doubt that effective process control is in place to ensure that products or services will meet specified requirements;

- the effect of this nonconformity is detrimental to the integrity of the product or service;

- this nonconformity indicates a total breakdown of a system to meet an ISO 9001 or AS9100-series standard requirement;

- this nonconformity could result in the probable delivery of a nonconforming product or service
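The drift mechanism described above can be illustrated with a deliberately simplified simulation. All of the numbers are hypothetical (a 1-inch block losing 2 μin. to wear per calibration cycle), the function name is my own, and the model assumes the micrometer is adjusted to read exactly nominal on the block at each cycle. Lengths are kept as whole microinches to avoid rounding noise.

```python
from typing import List, Tuple


def simulate_circular_calibration(
    nominal_uin: int, wear_per_cycle_uin: int, cycles: int
) -> List[Tuple[int, int]]:
    """Return (reported_error, true_error) of the gage block for each cycle."""
    true_length = nominal_uin  # assumption: the block starts exactly at nominal
    history = []
    for _ in range(cycles):
        # The gage block wears a little between calibrations.
        true_length -= wear_per_cycle_uin

        # Step 1: the micrometer is "calibrated" against the worn block and
        # adjusted until it reads nominal, so it inherits the block's error.
        micrometer_offset = nominal_uin - true_length

        # Step 2: the block is "calibrated" with that same micrometer.
        reading = true_length + micrometer_offset
        reported_error = reading - nominal_uin  # what the record shows
        true_error = true_length - nominal_uin  # what nobody can see

        history.append((reported_error, true_error))
    return history


for cycle, (reported, actual) in enumerate(
    simulate_circular_calibration(1_000_000, 2, 5), start=1
):
    print(f"cycle {cycle}: reported error {reported} uin, true error {actual} uin")
```

In this model every calibration record shows zero error while the true error grows with each cycle, which is why the loop cannot detect its own drift and why an external, traceable standard is needed.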

6 - Using a Precision Micrometer to calibrate Gage Blocks

Situation: Similar to the above scenario, but at a different company, you discover that the company is using a Starrett W733.1XFL-1 Wireless Electronic Micrometer to calibrate their class AS-2 Gage Block set (stainless steel, rectangular, 0.010" to 2"). While the Starrett W733.1XFL-1 Micrometer Calibration certificate contains minimal information, you notice that it has a digital display with a resolution of 0.00005" (50 μin.). To check whether the Test Accuracy Ratio (T.A.R.) is sufficient, you note that the Gage Blocks Calibration Certificate indicates an accuracy of ±10 μin. (±0.00001").

Gage Block classes and tolerances are specified in ASME B89.1.9, "Gage Blocks".

(1) Is this a nonconformity? And if so, (2) is it a minor or a major nonconformity?

Answer: More research would be required in order to make this determination.

You would need to access and review the specifications for the Starrett W733.1XFL-1 Wireless Electronic Micrometer. Upon doing this, you would discover that this particular micrometer is less accurate than its resolution suggests: its stated accuracy is ±0.0001" (±100 μin.), twice its 0.00005" display resolution. If the company assumed that the resolution and the accuracy were the same, they would have been wrong.

In calculating the Test Accuracy Ratio (T.A.R.), we take the Gage Block tolerance of 0.00001" and divide it by the accuracy of the Starrett W733.1XFL-1 micrometer, which is stated in its specifications as 0.0001" (±100 μin.). This results in: 0.00001 ÷ 0.0001 = 0.1 (or 1:10). This means that the Gage blocks are 10x more accurate than the micrometer! This ratio is the inverse of what we would like to have seen!

A major nonconformity is clearly justified.
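The T.A.R. arithmetic above can be checked with a short sketch. The values come from this scenario and are expressed in microinches; the function and constant names are hypothetical, and the 4:1 minimum is the benchmark used earlier in this article rather than a requirement of ISO 9001 / AS9100.

```python
def accuracy_ratio(uut_tolerance: float, standard_accuracy: float) -> float:
    """Tolerance of the device being calibrated divided by the accuracy
    of the instrument used to calibrate it (same units for both)."""
    if standard_accuracy <= 0:
        raise ValueError("standard accuracy must be positive")
    return uut_tolerance / standard_accuracy


GAGE_BLOCK_TOLERANCE_UIN = 10    # ±10 uin. from the gage block certificate
MICROMETER_ACCURACY_UIN = 100    # ±0.0001" stated accuracy of the micrometer
MICROMETER_RESOLUTION_UIN = 50   # 0.00005" display resolution (NOT accuracy)
MINIMUM_RATIO = 4.0              # the 4:1 benchmark

ratio = accuracy_ratio(GAGE_BLOCK_TOLERANCE_UIN, MICROMETER_ACCURACY_UIN)

# The ratio the company would get by mistaking resolution for accuracy.
mistaken_ratio = accuracy_ratio(GAGE_BLOCK_TOLERANCE_UIN, MICROMETER_RESOLUTION_UIN)

print(f"T.A.R. using stated accuracy: {ratio:.1f}")
print(f"T.A.R. using display resolution: {mistaken_ratio:.1f}")
print(f"Meets {MINIMUM_RATIO:.0f}:1 minimum: {ratio >= MINIMUM_RATIO}")
```

Note that even the mistaken calculation based on display resolution falls well short of 4:1, so the micrometer is unsuitable for calibrating these gage blocks under either reading of its specifications.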

7 - Relocated Weighing Scale

Situation: A weighing scale was calibrated at a different location and then shipped to its current location. There is no evidence that the calibration was repeated to verify that the weighing scale remained in-tolerance following the move.

(1) Is this a nonconformity? And if so, (2) is it a minor or a major nonconformity?

Answer: The proper follow-up question is whether there is any documentation or record indicating that this specific model of weighing scale is immune from possible effects of

- the difference in local gravity acceleration,

- variation in environmental conditions, and/or

- mechanical and thermal conditions during transportation that likely altered the performance of the instrument?

If the answer is no, and there is no other evidence to the contrary, this should be a Major nonconformance because we must assume that there is a high degree of probability that product quality (or quantity) has been affected. However, if the auditee has appropriate test weights (mass) providing an acceptable calibration accuracy ratio, and can verify that the weighing scale is in-tolerance during the audit, then this can be graded as a Minor Nonconformity (for not having a record of calibration AFTER the weighing scale was re-located).

The basis for this nonconformity is found in EURAMET Calibration Guide No. 18 (Version 4.0 (11/2015)), page 5, which states:

4.1.2 Place of calibration

Calibration is normally performed in the location where the instrument is being used.

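If an instrument is moved to another location after the calibration, possible effects from

- difference in local gravity acceleration,

- variation in environmental conditions,

- mechanical and thermal conditions during transportation

are likely to alter the performance of the instrument and may invalidate the calibration. Moving the instrument after calibration should therefore be avoided, unless immunity to these effects of a particular instrument, or type of instrument has been clearly demonstrated. Where this has not been demonstrated, the calibration certificate should not be accepted as evidence of traceability.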
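To give a sense of scale for the first of these effects, here is a rough sketch of how a difference in local gravity alone shifts the reading of a force-sensing (e.g., load cell) scale. It assumes the scale was adjusted at the calibration site and not re-adjusted after the move, and it ignores air buoyancy and the other two effects. The function name is my own, and the two gravity values are illustrative only: they approximate the low and high ends of sea-level gravity on Earth, not any real pair of sites.

```python
def indicated_mass_after_move(
    true_mass: float, g_calibration: float, g_use: float
) -> float:
    """Reading of a force-sensing scale at the new site.

    The scale converts force to mass using the gravity at the site where it
    was adjusted, so the reading scales with g_use / g_calibration.
    """
    if g_calibration <= 0 or g_use <= 0:
        raise ValueError("gravity values must be positive")
    return true_mass * g_use / g_calibration


# Hypothetical sites (m/s^2): calibrated at high gravity, used at low gravity.
G_CALIBRATION_SITE = 9.832
G_USE_SITE = 9.780

reading = indicated_mass_after_move(1000.0, G_CALIBRATION_SITE, G_USE_SITE)
error_percent = (reading - 1000.0) / 1000.0 * 100
print(f"1000 g load reads {reading:.2f} g ({error_percent:+.2f} %)")
```

Most relocations involve a much smaller gravity difference than this worst-case pairing, but the resulting error can still matter for a scale with a tight tolerance, which is why the auditor asks for evidence of immunity or a post-move verification.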

8 - Fitness for Purpose #1

Situation: You observed calipers being used throughout the production process; and observed that the majority of these calipers supported the “Step” dimensional measurement feature. Upon interviewing multiple operators, you learned that some use the “Step” feature while others do not. You took note that NONE of these calipers with a “Step” feature had a “Limited Calibration” status label… and you were told that the calipers are calibrated in-house. Upon examining the Calibration record, you noticed that the “Step” feature was not included in the calibration results. It appears that the company has not been calibrating the “Step” feature on calipers that support this dimensional measurement.

(1) Is this a nonconformity? And if so, (2) is it a minor or a major nonconformity?

Answer: This is a Major nonconformity.

ISO 9001 / AS 9100, sec. 7.1.5.1 states:

The organization shall retain appropriate documented information as evidence of fitness for purpose of the monitoring and measurement resources.

The auditor should have sufficient knowledge in using a caliper to understand that the “Step” feature can be “Out of Tolerance” due to wear on a point not otherwise calibrated. By recognizing that this feature had not been included in the calibration process, the auditor can justify a Major nonconformity because:

- there is a significant doubt that effective process control is in place to ensure that products or services will meet specified requirements;

- the effect of this nonconformity is detrimental to the integrity of the product or service;

- this nonconformity could result in the probable delivery of a nonconforming product or service

While ASME B89.1.14, "Calipers" & ISO 13385-1, "Geometrical product specifications (GPS) — Dimensional measuring equipment Part 1: Callipers; Design and metrological characteristics" both discuss the “Step” feature, T.O.33k6-4-552-1, "Calibration Procedure for Calipers-General" describes how to calibrate the “Step” feature on page 5.

While not all calipers have the ability to measure “Step Dimensions”, the vast majority of the calipers I’ve seen do. When it exists, this feature must be included in the calibration process. The below video from the Mitutoyo Metrology Training Lab describes, step-by-step, how a caliper is “supposed” to be calibrated.

A significant problem in the quality auditing profession is that many auditors lack the technical knowledge and expertise to recognize when a common measuring instrument, such as calipers, has not been calibrated to its full capabilities.

This knowledge is essential because many companies who choose to calibrate instruments “in-house” (typically as a cost-savings measure) often fail to properly train their personnel in “how” to properly calibrate these instruments.

9 - Fitness for Purpose #2 (AS9100 ONLY)

Situation: You observed micrometers being used throughout the production process; and you were told that the micrometers are calibrated “in-house”. Upon reviewing the company's “register of the monitoring and measuring equipment” you discovered that they identify a calibration/verification method. Upon reviewing that calibration/verification method, you noticed that neither the “flatness” nor “parallelism” of the anvil with the spindle had been calibrated. You confirm this by examining calibration records for a sampling of the micrometers you observed in use. None of these records indicate that the “flatness” or “parallelism” of the anvil with the spindle had been calibrated.

(1) Is this a nonconformity? And if so, (2) is it a minor or a major nonconformity?

Answer: This is a major nonconformity.

ISO 9001 / AS 9100, sec. 7.1.5.1 states:

…the organization shall retain appropriate documented information as evidence of fitness for purpose of the monitoring and measurement resources.

The auditor should have sufficient knowledge of using a micrometer to understand that a failure to verify the anvil and spindle Flatness & Parallelism could result in measurement errors due to wear patterns OR dirt / small metal chips embedded in the anvil or spindle. Therefore, checking the flatness & parallelism should be the first step in calibrating a micrometer.

In the video below, a metrologist from Mitutoyo discusses using an optical flat for verifying both micrometer anvil Flatness & Parallelism at: 1:44 to 2:15 (Intro) and 17:50 to 24:55 (detailed discussion).

ASME B89.1.13, “Micrometers”, page 10 “Nonmandatory Appendix C - Test Methods” specifies verification of flatness using an optical flat and a monochromatic light source (in C-2.2). T.O. 33k6-4-15-1 "Calibration Procedure for Micrometers Micrometer Heads and Depth Micrometers-General" also specifies verification of flatness using an optical flat and a monochromatic light source (in 4.1.1).

ASME B89.1.13, “Micrometers” describes using a precision sphere (metal ball) to verify Parallelism (in C-2.4) and using gage blocks (in C-2.5) for larger micrometers (i.e., micrometers designed for larger measurements where the anvils do not touch). It also describes how you can use optical flats to verify Parallelism (in C-4.6).

Auditors should take note that Mitutoyo, ASME & the USAF all agree that calibration of micrometer anvil Flatness & Parallelism is important. They should also watch the below video from Mitutoyo describing, step-by-step, how a common outside micrometer is “supposed” to be calibrated.

10 - The Incomplete "Register" (AS9100 ONLY)

Situation: Upon reviewing the company's “register of the monitoring and measuring equipment”, which was maintained in an Excel spreadsheet, you discovered it did not include the:

- calibration or verification method, and

- acceptance criteria.

The company explained that, since all of their calibrations are outsourced, this information can be found in the calibration certificates provided by the external calibration lab.

(1) Is this a nonconformity? And if so, (2) is it a minor or a major nonconformity?

Answer: This is NOT a nonconformity.

The following question was submitted to IAQG (through OASIS) for an official clarification (i.e., interpretation):

The 9100:2009-series verbiage require a calibration register and the definition of processes for calibration/verification (including equipment type, ID, frequency, methods and acceptance criteria), but didn’t seem to require them to be one in the same. The 9100:2016 standard appears to mandate these definitions be incorporated into the register itself, as opposed to just being defined. Is this required to be taken literally that the register is required to have this information is absolute?

The IAQG "Official" AS 9100:2016 Series Clarifications contains the following response:

The 9100-series clause 7.1.5.2 was not intended to force organizations to have the register specifically include the “equipment type, unique identification, location, and the calibration or verification method, frequency, and acceptance criteria.” The organization is required to have this information for equipment listed on the calibration register but not specifically in the register.

To further clarify, if the documented information is available, and not necessarily in the “register”, IAQG considers the requirement to have been adequately addressed.
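
As a rough illustration of that clarification, the sketch below checks that each item of required information is available somewhere, whether in the register or in another record such as an external calibration certificate. The function name, dictionary keys, and sample values (including "MIC-001") are hypothetical; only the six information items come from the clause text quoted above.

```python
# The six items named in the clause text quoted above.
REQUIRED_INFO = (
    "equipment type",
    "unique identification",
    "location",
    "calibration or verification method",
    "frequency",
    "acceptance criteria",
)


def missing_information(register_row: dict, other_records: dict) -> list:
    """Return required items found in neither the register nor other records."""
    available = {
        name
        for source in (register_row, other_records)
        for name, value in source.items()
        if value  # an empty entry does not count as available
    }
    return [name for name in REQUIRED_INFO if name not in available]


# Hypothetical register row and outsourced calibration certificate.
register_row = {
    "equipment type": "micrometer",
    "unique identification": "MIC-001",
    "location": "machine shop",
    "frequency": "12 months",
}
certificate = {
    "calibration or verification method": "stated on certificate",
    "acceptance criteria": "stated on certificate",
}

print(missing_information(register_row, certificate))  # prints []
```

With no certificate on file, the same call would report the method and the acceptance criteria as missing, which is the situation an auditor would need to follow up on.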

The entire IAQG "Official" AS 9100:2016 Series Clarifications is available online.

To contact the SDR (Sector Document Representative) for the AS 9100-series in your region, see "Contact info. for AS Standard IDRs (IAQG Document Representatives) & SDRs (Sector Document Representatives) - Updated Oct. 2021".